Understanding Neural Processing Units (NPUs): The Future of AI Accelerators

Yash Raj | Posted on Aug 27, 2024

Table of Contents

- Abstract
- What is a Neural Processing Unit (NPU)?
- Core Concept
- Why Are NPUs Gaining Importance?
- Future Enhancements
- Core Applications
- Comparison: CPU vs. GPU vs. NPU

Abstract

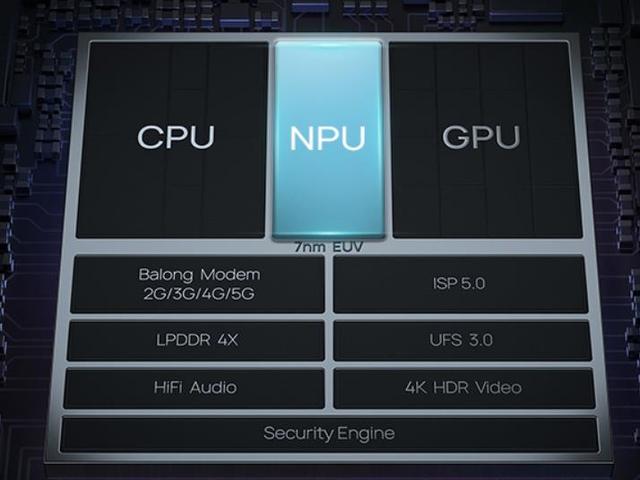

Neural Processing Units (NPUs) are transforming the landscape of artificial intelligence (AI) and machine learning (ML) by offering specialized hardware designed to accelerate AI tasks. Unlike traditional CPUs and GPUs, NPUs are optimized for the specific computational needs of AI algorithms, including parallel processing and efficient memory usage. The result is significantly higher performance and lower power consumption on AI workloads.

As AI models grow more complex, NPUs are becoming increasingly essential for real-time data processing in various applications, such as autonomous vehicles, healthcare diagnostics, and smart devices. The future of NPUs looks promising with advancements aimed at enhancing their integration with other technologies, supporting more sophisticated algorithms, and increasing flexibility. Understanding NPUs provides insight into their critical role in driving the next generation of AI innovations.

What is a Neural Processing Unit (NPU)?

Imagine you have a super-smart assistant who’s great at handling a specific task, like solving really complex puzzles or recognizing faces in photos. This assistant is so good at this one thing that they can do it much faster and more efficiently than anyone else.

A Neural Processing Unit (NPU) is like that super-smart assistant, but for computers. It's a special type of computer chip designed to handle tasks related to artificial intelligence (AI) and machine learning (ML). These tasks include things like recognizing images, understanding speech, or making predictions. Regular computer chips, like CPUs (Central Processing Units) and GPUs (Graphics Processing Units), are good at a lot of general tasks. But NPUs are specifically built to make AI tasks faster and more efficient. This means they can process complex data quickly and use less power compared to regular chips. So, when you see things like voice assistants or smart cameras, NPUs are often behind the scenes, making sure everything runs smoothly and efficiently.

Core Concept

At their core, NPUs are specialized hardware accelerators that execute AI algorithms faster and more efficiently than general-purpose CPUs or GPUs. They are designed to handle operations commonly used in neural networks, such as matrix multiplications and convolutions, with greater speed and reduced power consumption.
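The point that NPUs focus on matrix multiplications and convolutions is easier to see once you notice that a convolution can be rewritten as a matrix multiplication, so both boil down to multiply-accumulate (MAC) operations. The plain-Python sketch below uses the common "im2col" lowering; it illustrates the arithmetic only, is not how any particular NPU or framework implements it, and its function names are hypothetical.

```python
# A minimal sketch: a 2D convolution (no padding, stride 1) rewritten as a
# matrix multiplication via "im2col". Both reduce to multiply-accumulate (MAC)
# operations, the kind of work NPU datapaths are built around.

def matmul(a, b):
    """Naive matrix multiply; each inner-loop step is one MAC."""
    rows, inner, cols = len(a), len(b), len(b[0])
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            acc = 0
            for k in range(inner):
                acc += a[i][k] * b[k][j]  # one multiply-accumulate
            out[i][j] = acc
    return out


def im2col(image, kh, kw):
    """Unroll every kh x kw patch of `image` into one column."""
    h, w = len(image), len(image[0])
    out_h, out_w = h - kh + 1, w - kw + 1
    cols = [[0] * (out_h * out_w) for _ in range(kh * kw)]
    for oy in range(out_h):
        for ox in range(out_w):
            c = oy * out_w + ox
            for dy in range(kh):
                for dx in range(kw):
                    cols[dy * kw + dx][c] = image[oy + dy][ox + dx]
    return cols, out_h, out_w


def conv2d(image, kernel):
    """Convolution (cross-correlation, as in most ML frameworks) via matmul."""
    kh, kw = len(kernel), len(kernel[0])
    cols, out_h, out_w = im2col(image, kh, kw)
    flat_kernel = [[v for row in kernel for v in row]]  # 1 x (kh*kw)
    flat_out = matmul(flat_kernel, cols)[0]
    # Reshape the flat result back into an out_h x out_w feature map.
    return [flat_out[r * out_w:(r + 1) * out_w] for r in range(out_h)]


image = [[1, 2, 3],
         [4, 5, 6],
         [7, 8, 9]]
kernel = [[1, 0],
          [0, -1]]
print(conv2d(image, kernel))  # [[-4, -4], [-4, -4]]
```

Lowering convolutions to matrix multiplication in this way lets one matrix-multiply engine serve both kinds of layer, at the cost of duplicating overlapping pixels in the unrolled matrix.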

- Parallel Processing: NPUs can process multiple data points simultaneously, significantly speeding up AI model training and inference.

- Efficient Memory Usage: NPUs use advanced memory management techniques to reduce latency and bandwidth requirements.

- Customizable Architectures: Many NPUs offer configurable architectures to optimize performance for specific AI models or tasks.
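Efficient memory usage in accelerators often comes from data reuse: load a block of data into fast on-chip memory once, then use it for many operations. Loop tiling is one generic technique for this. The article does not say which techniques specific NPUs use, so treat the sketch below, including its simple load-counting model and the hypothetical `tiled_matmul` name, as an illustration under stated assumptions.

```python
# A minimal sketch of loop tiling: copy small tiles into a fast "local buffer"
# once, then reuse each loaded value for many multiply-accumulates. The buffer
# is just a Python list and the load counter is an illustrative model, not a
# hardware measurement.

def tiled_matmul(a, b, tile):
    n = len(a)  # assumes square n x n matrices with n divisible by tile
    assert n % tile == 0, "n must be a multiple of the tile size"
    c = [[0] * n for _ in range(n)]
    loads = 0  # elements copied from "main memory" into the local buffer
    for i0 in range(0, n, tile):
        for j0 in range(0, n, tile):
            for k0 in range(0, n, tile):
                # Load one tile of A and one tile of B into the local buffer.
                a_tile = [row[k0:k0 + tile] for row in a[i0:i0 + tile]]
                b_tile = [row[j0:j0 + tile] for row in b[k0:k0 + tile]]
                loads += 2 * tile * tile
                # Each loaded value is reused `tile` times in this block.
                for i in range(tile):
                    for j in range(tile):
                        acc = 0
                        for k in range(tile):
                            acc += a_tile[i][k] * b_tile[k][j]
                        c[i0 + i][j0 + j] += acc
    return c, loads


n = 8
a = [[i + j for j in range(n)] for i in range(n)]
b = [[i - j for j in range(n)] for i in range(n)]
for tile in (1, 2, 4):
    _, loads = tiled_matmul(a, b, tile)
    print(f"tile={tile}: {loads} element loads")
# prints 1024, 512 and 256 element loads (2 * n**3 / tile)
```

In this model, doubling the tile size halves the traffic to main memory, which is why larger on-chip buffers tend to reduce latency and bandwidth requirements.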

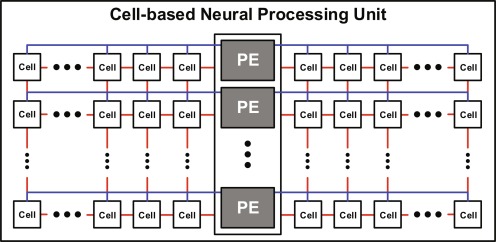

Cell-based Neural Processing Unit

At its core, this design leverages a grid-like arrangement of interconnected cells, each potentially representing a neuron or a small cluster of neurons. These cells are organized in multiple rows and columns, allowing for extensive parallel processing. The central column houses Processing Elements (PEs), which likely serve as coordination and computation hubs. The intricate network of connections—horizontal blue lines linking cells within rows, vertical red lines connecting cells across rows, and purple lines interfacing cells with PEs—enables complex data flow and information processing. This structure's modular nature, indicated by the ellipses suggesting expandability, allows for scalability to tackle increasingly complex computational tasks.

Such an architecture could significantly enhance the efficiency and capabilities of neural network computations, potentially leading to advancements in artificial intelligence and machine learning applications.
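The figure is described only qualitatively. One well-known way a grid of cells with horizontal and vertical links can compute a neural-network layer is a systolic array, in which operands pulse through neighboring cells and each cell performs one multiply-accumulate per cycle. Whether the pictured design works this way is an assumption; the cycle-level simulation below is a generic illustration, and `systolic_matmul` is a hypothetical name.

```python
# A minimal cycle-level sketch of an output-stationary systolic array.
# Values of A flow left-to-right along rows, values of B flow top-to-bottom
# along columns, and each cell (i, j) accumulates C[i][j]. Illustrative only;
# not claimed to be the design shown in the figure.

def systolic_matmul(a, b):
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]    # one accumulator per cell
    a_reg = [[0] * m for _ in range(n)]  # value each cell passes to the right
    b_reg = [[0] * m for _ in range(n)]  # value each cell passes downward
    cycles = n + m + k - 2               # cycles until the last operands meet
    for t in range(cycles):
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:
                    # Row i of A enters at the left edge, delayed by i cycles.
                    s = t - i
                    new_a[i][j] = a[i][s] if 0 <= s < k else 0
                else:
                    new_a[i][j] = a_reg[i][j - 1]
                if i == 0:
                    # Column j of B enters at the top edge, delayed by j cycles.
                    s = t - j
                    new_b[i][j] = b[s][j] if 0 <= s < k else 0
                else:
                    new_b[i][j] = b_reg[i - 1][j]
                # Each cell performs one multiply-accumulate per cycle.
                acc[i][j] += new_a[i][j] * new_b[i][j]
        a_reg, b_reg = new_a, new_b
    return acc, cycles


a = [[1, 2, 3],
     [4, 5, 6]]   # 2 x 3
b = [[7, 8],
     [9, 10],
     [11, 12]]    # 3 x 2
c, cycles = systolic_matmul(a, b)
print(c, cycles)  # [[58, 64], [139, 154]] 5
```

The skewed inputs guarantee that A[i][s] and B[s][j] arrive at cell (i, j) in the same cycle, so every cell only ever talks to its immediate neighbors, which is what makes such grids easy to scale.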

Why Are NPUs Gaining Importance?

The increasing complexity of AI models and the exponential growth in data volume have pushed traditional processors to their limits. NPUs have emerged as a critical solution to these challenges.

- Enhanced Performance: NPUs are specifically designed to accelerate AI and ML workloads. Unlike general-purpose CPUs, or even GPUs, which are optimized for a broad range of tasks, NPUs are engineered with dedicated hardware components for the matrix and tensor operations fundamental to AI computations. This specialization lets NPUs handle many operations simultaneously, achieving faster processing speeds and higher throughput than CPUs and GPUs on these workloads.

- Lower Power Consumption: Energy efficiency is a crucial consideration when deploying AI technologies. NPUs are more energy-efficient on AI workloads, which is necessary for scaling AI applications on mobile and edge devices, where optimized power usage also extends battery life.

- Scalability: NPUs support scaling AI workloads across multiple processors, allowing for more extensive and complex models to be deployed.
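The scalability point can be illustrated with data parallelism, where a batch of inputs is split across several processors and each computes its share of the same layer. The sketch below is a hypothetical, framework-free illustration: threads stand in for separate processors, and real multi-NPU systems must also move data between devices, which this ignores.

```python
# A minimal sketch of data-parallel scaling: split the rows of the input batch
# across several workers, let each compute its slice of the matrix product,
# and concatenate the results. Threads stand in for separate processors; they
# do not give real speedups for pure-Python arithmetic.
from concurrent.futures import ThreadPoolExecutor


def matmul(a, b):
    # zip(*b) iterates over the columns of b.
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def sharded_matmul(a, b, num_workers):
    # Split the rows of `a` into contiguous shards, at most one per worker.
    size = -(-len(a) // num_workers)  # ceiling division
    shards = [a[i:i + size] for i in range(0, len(a), size)]
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # Every worker needs the full weight matrix `b`; only `a` is split.
        partials = pool.map(lambda shard: matmul(shard, b), shards)
        # pool.map preserves shard order, so rows come back in input order.
        return [row for part in partials for row in part]


inputs = [[i, i + 1] for i in range(5)]  # batch of 5 two-feature inputs
weights = [[1, 0, 2],
           [0, 1, 3]]                    # 2 x 3 weight matrix
print(sharded_matmul(inputs, weights, 3))
# [[0, 1, 3], [1, 2, 8], [2, 3, 13], [3, 4, 18], [4, 5, 23]]
```

Splitting the batch rather than the weights keeps each worker independent; the trade-off is that every worker must hold a full copy of the weights.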

Future Enhancements

As the field of artificial intelligence (AI) continues to advance, the development of Neural Processing Units (NPUs) is expected to follow suit with several key enhancements. These improvements will further boost the capabilities, efficiency, and versatility of NPUs, making them even more integral to AI and machine learning applications.

- Integration with Other Technologies: Combining NPUs with other AI hardware, such as FPGAs and ASICs, could create hybrid systems that offer even greater performance. Moreover, the convergence of NPUs with other advanced computing technologies could lead to more brain-like processing architectures, improving the efficiency and adaptability of AI systems.

- Advanced AI Algorithms: Future NPUs will be designed to support more sophisticated AI models and algorithms, such as those used in natural language processing and computer vision, including models that require new types of computation or processing paradigms.

- Improved Flexibility: Greater programmability and adaptability will let NPUs handle more types of AI workloads, accommodating a wider range of algorithms, applications, and use cases.

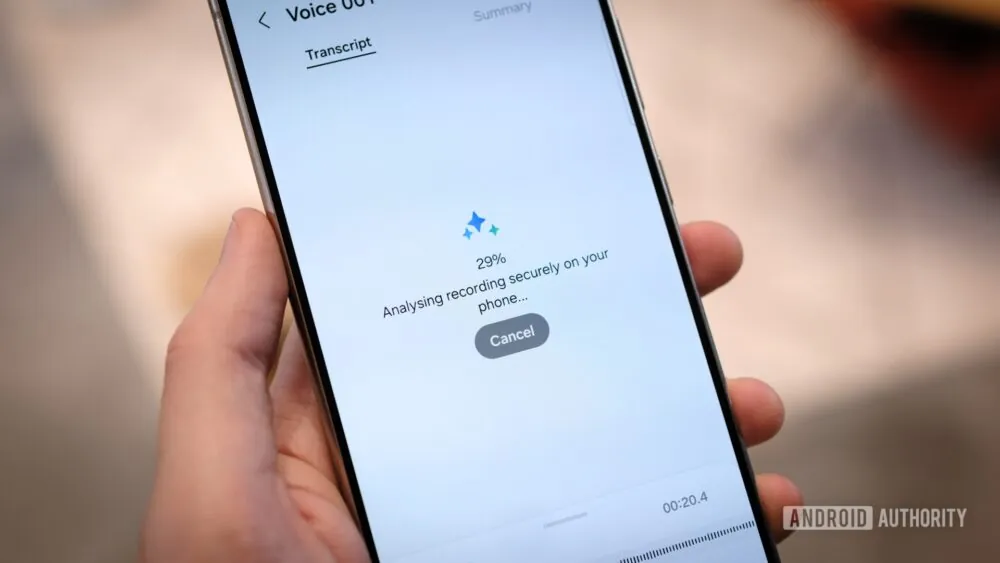

- Enhanced Security Features: For built-in encryption, NPUs may incorporate hardware-based encryption and security features to protect sensitive data and ensure secure AI processing. For privacy preservation, advanced privacy-preserving technologies, such as secure multi-party computation, will be integrated into NPUs to safeguard user data during AI computations.
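Secure multi-party computation can sound abstract. At its simplest, it relies on secret sharing: data is split into random-looking pieces so that no single party sees the original, yet some arithmetic can still be performed on the pieces. The sketch below shows textbook additive secret sharing only; it is not a description of how any NPU implements privacy features, and the modulus and function names are chosen for illustration.

```python
# A minimal sketch of additive secret sharing, a basic building block of
# secure multi-party computation (MPC). A private value is split into random
# shares modulo a prime; the shares sum to the secret, and parties can add
# shared values or scale them by public constants without seeing them.
import secrets

PRIME = 2**61 - 1  # a Mersenne prime, chosen here purely for illustration


def share(value, num_parties):
    """Split `value` into `num_parties` random shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(num_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares):
    return sum(shares) % PRIME


def add_shared(x_shares, y_shares):
    # Each party adds its own two shares locally; nobody learns x or y.
    return [(x + y) % PRIME for x, y in zip(x_shares, y_shares)]


def scale_shared(x_shares, public_weight):
    # Multiplying by a public constant is also a purely local operation.
    return [(x * public_weight) % PRIME for x in x_shares]


x = share(42, 3)
y = share(100, 3)
print(reconstruct(add_shared(x, y)))    # 142
print(reconstruct(scale_shared(x, 5)))  # 210
```

Additions and public-weight multiplications are cheap on shares, which is why linear layers map naturally onto this scheme; multiplying two secret values requires extra protocol steps not shown here.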

Core Applications

Neural Processing Units (NPUs) are rapidly becoming essential in a wide range of applications due to their ability to accelerate artificial intelligence (AI) and machine learning tasks. In the realm of mobile devices, NPUs enhance performance and efficiency by enabling advanced features such as real-time image and speech recognition, augmented reality (AR), and personalized user experiences. They are also transforming automotive technologies by powering the sophisticated systems required for autonomous driving, including object detection, lane-keeping assistance, and real-time decision-making processes. In healthcare, NPUs contribute to more accurate diagnostics and predictive analytics by processing complex medical imaging and genomics data quickly. In edge computing, NPUs enable real-time data analysis and processing on local devices, which is crucial for applications like smart surveillance, industrial automation, and IoT devices, where low latency and high reliability are critical.

Additionally, NPUs are pivotal in cloud computing environments, where they accelerate the training and inference of large-scale AI models, supporting applications from natural language processing to recommendation systems. The flexibility and performance of NPUs make them indispensable in advancing AI technologies across various sectors, enhancing capabilities and driving innovation in ways that were previously unattainable with traditional processors.

Comparison: CPU vs. GPU vs. NPU

| Feature | CPU | GPU | NPU |
|---|---|---|---|
| Primary Use | General-purpose computing | Parallel processing for graphics | AI and ML acceleration |
| Processing Speed (AI workloads) | Moderate | High | Very High |
| Power Efficiency (AI workloads) | Low | Moderate | High |
| Parallelism | Low | High | Very High |
| Customizability | High | Moderate | High |

Neural Processing Units represent a significant leap forward in AI hardware technology. By offering specialized capabilities tailored to the needs of AI and machine learning, NPUs are set to play a crucial role in the future of computing. As technology continues to advance, NPUs will likely become more prevalent, driving innovations across a wide range of industries and applications.

References

[1] Image source: Microsoft, "Do more with Surface: what are NPUs?" (accessed Aug 27, 2024).

[2] Kyuho J. Lee, "Chapter Seven - Architecture of neural processing unit for deep neural networks" (accessed Aug 27, 2024).

[3] Robert Triggs, "Why your next laptop will have an NPU, just like your phone: Just what is that NPU in your new laptop or smartphone doing?" (accessed Aug 25, 2024).

[4] Durga Malladi and Pat Lawlor (Qualcomm), "The NPU is built for AI and complements the other processors to accelerate generative AI experiences" (accessed Aug 26, 2024).

[5] Elaine Zhong, "Literacy Automotive Intelligent: To understand CPU, GPU, NPU, DPU, MCU, ECU immediately" (accessed Aug 27, 2024).